Verifiable Compute

Cryptographic evidence attached to every inference, binding execution context to machine-generated output through attested hardware and zero-knowledge proofs.

Aethelred is a verifiable compute network designed to help enterprises move AI into production with cryptographic evidence, policy-aware controls, and auditable execution.

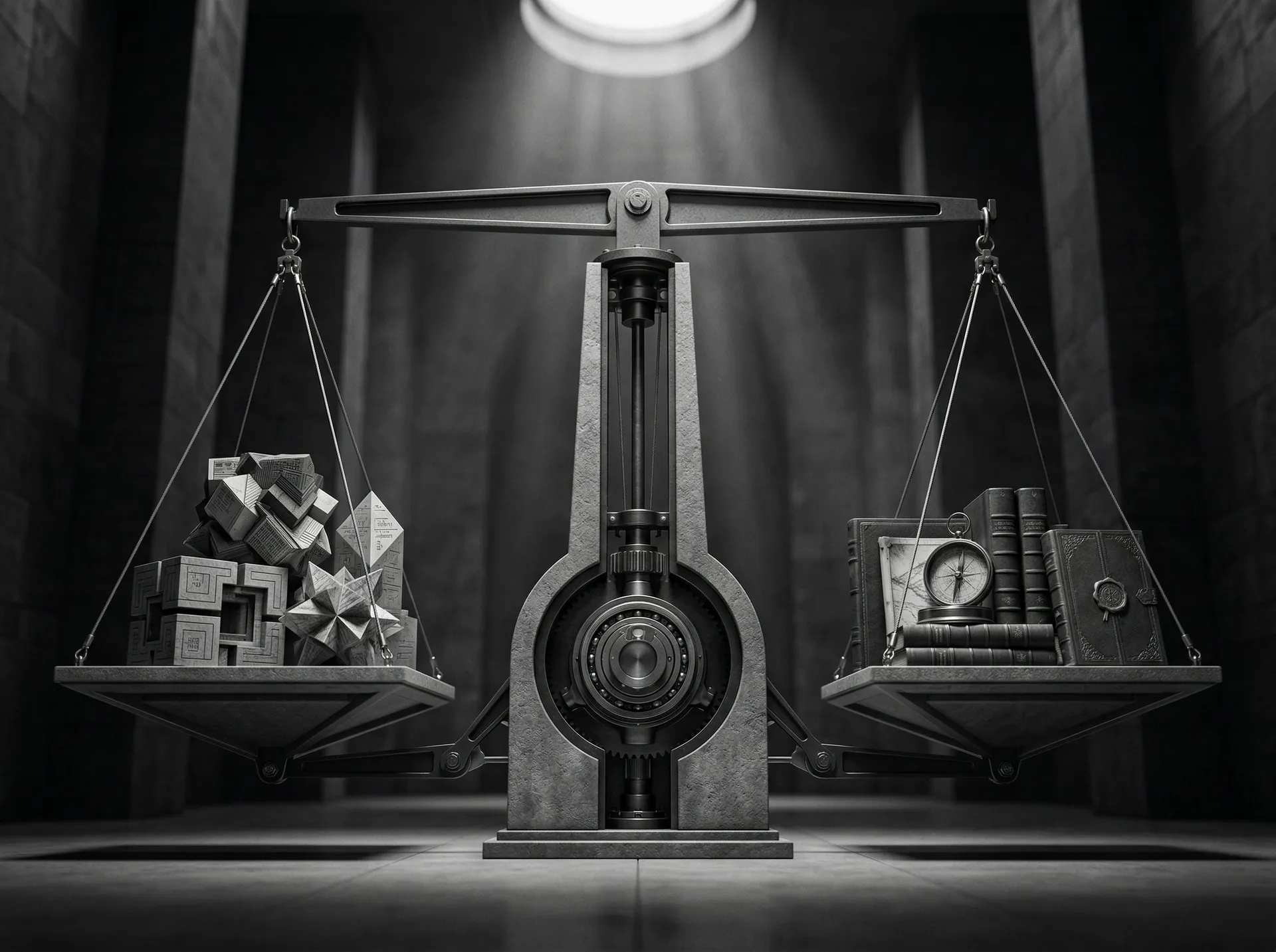

Today, most AI systems are powerful but opaque.

Aethelred is built for environments where performance alone is not enough: outputs must also be provable, governable, and ready for regulated deployment.

Portable trust artifacts that bind execution evidence, verification signals, and policy-relevant metadata to machine-generated outputs.
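To make the idea of binding concrete, here is a minimal Python sketch of a seal-like record. It is an illustration only, not Aethelred's seal format: `DigitalSeal`, `make_seal`, `seal_matches`, and all field names are hypothetical, and a plain SHA-256 commitment stands in for the attestation signatures and zero-knowledge proofs a real seal would carry.

```python
import hashlib
import json
from dataclasses import dataclass


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of raw bytes."""
    return hashlib.sha256(data).hexdigest()


def canonical(obj) -> bytes:
    """Serialize to JSON with sorted keys so equal objects hash identically."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


@dataclass(frozen=True)
class DigitalSeal:
    # Hypothetical fields, chosen for illustration only.
    output_hash: str       # digest of the machine-generated output
    evidence_hash: str     # digest of the execution evidence
    policy_metadata: dict  # policy-relevant metadata carried with the seal
    seal_id: str           # digest committing to all three parts above


def make_seal(output: bytes, evidence: dict, policy_metadata: dict) -> DigitalSeal:
    """Build a seal whose id commits to the output, evidence, and policy data."""
    output_hash = sha256_hex(output)
    evidence_hash = sha256_hex(canonical(evidence))
    seal_id = sha256_hex(canonical({
        "output": output_hash,
        "evidence": evidence_hash,
        "policy": policy_metadata,
    }))
    return DigitalSeal(output_hash, evidence_hash, policy_metadata, seal_id)


def seal_matches(seal: DigitalSeal, output: bytes, evidence: dict) -> bool:
    """Recompute the seal from the presented output and evidence and compare ids."""
    return make_seal(output, evidence, seal.policy_metadata).seal_id == seal.seal_id
```

Changing either the output or the evidence changes the recomputed `seal_id`, which is what makes the record portable: a verifier needs only the seal and the artifacts it claims to cover.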

Policy-aware routing and evidence architecture for regulated jurisdictions, institutional requirements, and sovereign deployment corridors.

Aethelred combines deterministic settlement, attested compute, and policy-aware controls. Each workload moves through a fixed sequence of stages: Commit → Schedule → Attested Inference → Proof Generation → On-Chain Settlement → Digital Seal.
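The stage sequence can be pictured as a small state machine in which a job may only move to the next stage in order. The Python sketch below is an assumption-laden illustration: the `Stage` enum mirrors the stage names on this page, while the `Job` class and its methods are hypothetical and do not describe the protocol's actual scheduler or settlement logic.

```python
from enum import IntEnum
from typing import List, Optional


class Stage(IntEnum):
    # Names follow the stage list on this page; numeric values are illustrative.
    COMMIT = 0
    SCHEDULE = 1
    ATTESTED_INFERENCE = 2
    PROOF_GENERATION = 3
    ON_CHAIN_SETTLEMENT = 4
    DIGITAL_SEAL = 5


class Job:
    """Hypothetical job record that tracks which stages have completed."""

    def __init__(self, job_id: str) -> None:
        self.job_id = job_id
        self.completed: List[Stage] = []

    def next_stage(self) -> Optional[Stage]:
        """Return the stage the job must run next, or None when finished."""
        if len(self.completed) == len(Stage):
            return None
        return Stage(len(self.completed))

    def advance(self, stage: Stage) -> None:
        """Record a stage as done, rejecting skipped or repeated stages."""
        expected = self.next_stage()
        if stage != expected:
            raise ValueError(f"expected {expected!r}, got {stage!r}")
        self.completed.append(stage)
```

Under this model a job cannot reach `DIGITAL_SEAL` without first passing through attested inference, proof generation, and settlement, which is the ordering property the stage list implies.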

Aethelred is designed for environments where AI outputs must be more than useful. They must be provable, governable, and operationally acceptable.

Enterprise workloads follow a fail-closed hybrid path designed to make trust a required property, not an optional one. Production readiness depends on release bundles, operator rehearsals, and governed deployment discipline.
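Fail-closed means that a result is withheld unless every required check explicitly passes; errors and missing checks count as failures rather than being skipped. The following Python sketch shows that general pattern only. The function `release_fail_closed` and the shape of `result` are hypothetical, and the sketch does not model what the hybrid path consists of.

```python
from typing import Callable, Iterable

# A check takes the result record and returns True only when it passes.
Check = Callable[[dict], bool]


def release_fail_closed(result: dict, checks: Iterable[Check]) -> bool:
    """Return True only if at least one check exists and all checks return True."""
    checks = list(checks)
    if not checks:
        # No configured checks is treated as a failure, not as a free pass.
        return False
    for check in checks:
        try:
            if check(result) is not True:
                return False
        except Exception:
            # A crashing check denies release instead of being ignored.
            return False
    return True
```

The design choice worth noting is the default: the function starts from "deny" and only reaches "allow" after every check has affirmatively succeeded, so a misconfigured or failing verifier cannot silently let an unverified output through.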

Validated partnerships, active pilots, and a professional validator network building the trust layer for AI-native systems.

Q1 2026 (Completed)

Core protocol deployment. PoUW consensus, TEE attestation, and basic validator operations.

Q2 2026 (In Progress)

External validator onboarding. SDK release for Python, TypeScript, Rust, Go.

Q3 2026 (Upcoming)

External security audit completion. Benchmark pack verification.

Explore the architecture, review the use cases, and connect with the team building the trust layer for AI-native systems.